Amazon delivery drivers were involved in at least 60 accidents resulting in 13 deaths between 2015 and 2019. And that was before drivers were being forced to make dangerous decisions by in-vehicle AI cameras and crappy algorithms.

Up front: Motherboard ran a horrific article (great reporting, horrifying topic) about Amazon’s third-party driver surveillance system.

The skinny is that thousands of drivers across the US are being monitored by AI systems that actively watch drivers and their surroundings for “events” that trigger negative feedback for the driver.

I’m being careful with how I word this because Amazon wants journalists like me to say that these systems are designed to make deliveries safer.

But Amazon’s clearly instituted these systems to cut liability and pay delivery service partners (DSPs) less. And there doesn’t appear to be any evidence that such systems make deliveries safer.

It actually looks as though they’re distracting drivers and causing them to make erratic, dangerous decisions.

Background: AI is really, really stupid. I cannot express how wrong the general public is about what “they can do with AI these days.”

But that’s not stopping Amazon from misleading people and its partners about the systems’ capability.

Per the aforementioned Motherboard article:

“The Netradyne cameras that Amazon installed in our vans have been nothing but a nightmare,” a former Amazon delivery driver in Mobile, Alabama told Motherboard. “They watch every move we make. I have been ‘dinged’ for following too close when someone cuts me off. If I look into my mirrors to make sure I am safe to change lanes, it dings me for distraction because my face is turned to look into my mirror. I personally did not feel any more safe with a camera watching my every move.”

It’s easy to get lost in the individual morality surrounding this issue, but the big picture affects us all. After all, there are no long-term studies on the effects of constant surveillance on drivers. Even if we imagine a world where AI doesn’t make mistakes, there are considerations beyond just driving safely to care about.

What effects will these systems have on driver stress levels? Numerous employees interviewed by Motherboard expressed anxiety over the constant “dinging” from the system.

And this system is far from perfect. Drivers report being dinged for glancing at their side mirrors – something that’s necessary for safe driving. This means drivers have to make a choice between driving safely and earning their full paychecks and bonuses. That’s a tough position to put people in.

And the safety benefits Amazon touts? There’s no evidence that this system makes drivers safer.

Here’s more from the Motherboard piece:

Since Amazon installed Netradyne cameras in its vans … [an Amazon spokesperson] claims that accidents had decreased by 48 percent and stop sign and signal violations had decreased 77 percent. Driving without a seatbelt decreased 60 percent, following distance decreased 50 percent, and distracted driving had decreased 75 percent.

Those statistics sound like facts, but they don’t make a lot of sense. How can they tell exactly what percent of drivers weren’t wearing their seatbelts properly before monitoring systems were installed? How does Amazon know how closely drivers were following other vehicles before external cameras and sensors were installed?

And those aren’t the only questions we should be asking. Because Amazon has no clue what’s going to happen over the long term as more and more people are driving while distracted by faulty AI.

However, I will concede one thing to Amazon’s apparently made-up statistics. That is, I actually believe seatbelt violations have dropped significantly for drivers since the inception of AI monitoring. That’s because drivers were incentivized against wearing their seatbelts before they were being surveilled. Now they have to choose between getting dinged, which will cost them money, or not meeting quotas, which will also cost them money.

Per the Motherboard article:

Drivers say that with their steep delivery quotas and the fact that they are often getting in and out of the truck, buckling and unbuckling their seatbelt dozens of times in a single neighborhood can slow down the delivery process significantly.

But there are even bigger areas of concern. The system flags “events,” many of which drivers don’t know about, and sends them to humans for evaluation. Supposedly, every event is checked by a person.

That may sound fair, but it’s far from it.

Deeper: Let’s set aside for a moment that the people doing the checking are incentivized to meet quotas as well and certainly have their own biases.

Logic dictates that if one group of people generates more events than another group of people, it should be demonstrable that the first group engaged in less safe behaviors than the second. But that’s not how AI works. AI is biased just like humans are.

When an AI is purported to detect “drowsiness” or “distracted driving,” for example, we’re getting a computer’s interpretation of a human experience.

We know for a fact that AI struggles with non-white faces. So it’s almost guaranteed the systems Amazon’s DSPs are using to monitor drivers score the faces of drivers from minority groups differently than they score white male faces.

This means you could get “dinged” for looking drowsy just because you’re Black or because your face doesn’t look typical enough for the AI. It means white men are likely to generate fewer dings than other drivers.
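To see why raw ding counts can mislead, here’s a minimal sketch of the arithmetic. Every number in it is hypothetical and invented purely for illustration; none comes from Amazon, Netradyne, or Motherboard, and the function name `expected_dings` is my own. It assumes two groups of drivers who behave identically, monitored by a detector that wrongly flags safe driving more often for one group than the other.

```python
def expected_dings(unsafe_trips, safe_trips, true_positive_rate, false_positive_rate):
    """Expected number of flagged events for a group of drivers.

    Correct flags come from unsafe trips the detector catches;
    wrong flags come from safe trips the detector misreads.
    """
    correct_flags = unsafe_trips * true_positive_rate
    wrong_flags = safe_trips * false_positive_rate
    return correct_flags + wrong_flags


# HYPOTHETICAL inputs: both groups drive exactly the same way
# (50 unsafe trips and 950 safe trips each). Only the detector's
# false positive rate differs between the two groups.
group_a = expected_dings(50, 950, true_positive_rate=0.9, false_positive_rate=0.02)
group_b = expected_dings(50, 950, true_positive_rate=0.9, false_positive_rate=0.06)

print(f"Group A expected dings: {group_a:.0f}")
print(f"Group B expected dings: {group_b:.0f}")
```

Under these made-up rates, the second group racks up far more dings than the first despite identical driving. The gap comes entirely from the detector’s errors, which is why a higher event count alone can’t tell you who’s driving less safely.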

And, no matter what you look like, it means you can lose money for being cut off in traffic, checking your side mirrors, or anything else an algorithm can get wrong.

Quick take: Forget the moral issues of surveillance. Let’s just look at this pragmatically. Amazon considers its AI labs among the world’s most advanced. It sold facial recognition systems to thousands of law enforcement agencies. It doesn’t need to outsource this kind of stuff.

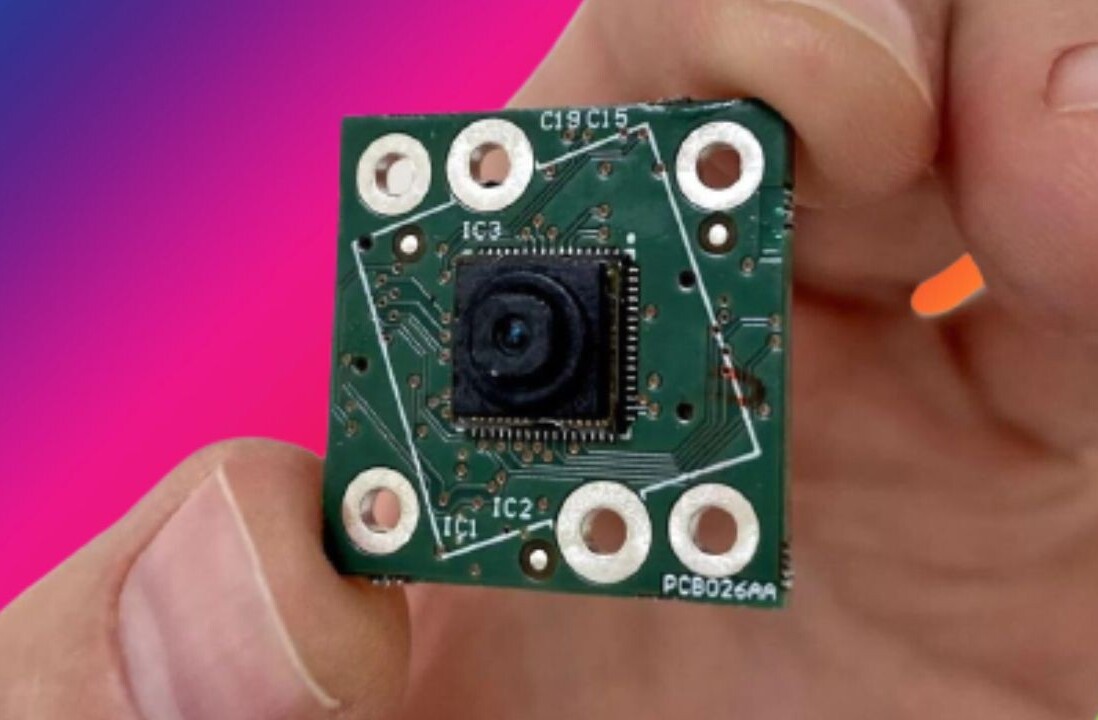

But it does. Not because Netradyne makes better cameras or better AI than Amazon could, but because Amazon doesn’t want liability for any of it. It’s someone else’s gear, someone else’s problem, and all Amazon requires is that its metrics are followed and its quotas are met.

Amazon is conducting large-scale psychological experiments on the general public using AI, but it isn’t even doing so in the name of science. It’s just trying to squeeze a few more pennies out of every delivery.

We don’t know what will happen when a machine pushes thousands upon thousands of drivers to conduct themselves unsafely on public roadways every day.

Amazon believes it’ll make them more efficient. But so would methamphetamines and monster trucks. There has to be a line somewhere and using algorithms to coerce people into unsafe decisions seems like a good place for it.

Netradyne and Amazon aren’t necessarily making the roads safer or making life easier for their drivers. They’re optimizing arbitrary metrics that, ultimately, only measure one thing: immediate company growth no matter the cost in human lives and livelihoods.

Amazon’s willing and able to conduct these experiments because it views employee well-being through the same financial lens through which US regulators view public safety.
